Have you ever found yourself confiding in a chatbot, perhaps about a tough day or a nagging worry? While experts often caution against treating AI like a therapist, many people are turning to tools like ChatGPT for comfort and advice. This has led to significant scrutiny, especially following incidents where users reportedly experienced severe distress after interacting with the AI. Now, OpenAI is introducing a voluntary safety feature designed to offer a crucial layer of support.

Bridging the Gap with a Trusted Contact

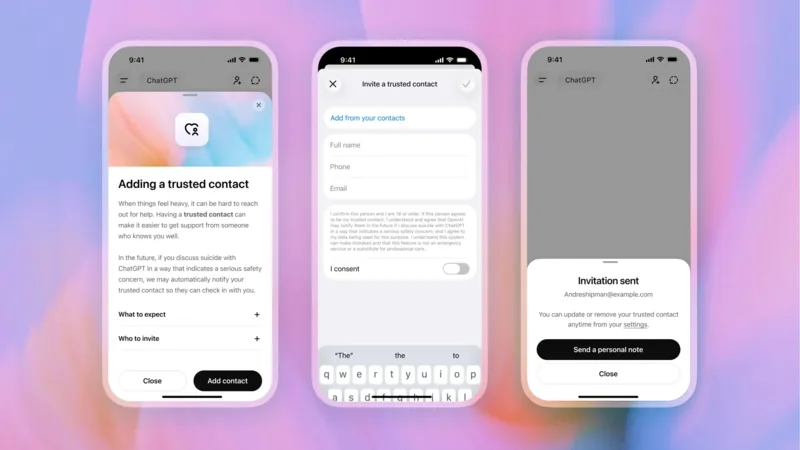

OpenAI has launched a new feature called Trusted Contact. The core idea is simple yet powerful: you can designate a trusted individual in your life to be notified if ChatGPT's systems detect you might be in a concerning state regarding self-harm. This isn't about sharing your personal conversations; rather, it's a safety net designed to encourage connection when it's needed most.

Imagine you're going through a difficult period and find yourself discussing these feelings with ChatGPT. The AI's advanced safety protocols might flag your conversation as potentially serious. Instead of just ending the chat, ChatGPT can now reach out to your chosen contact, gently prompting them to check in on you.

As an illustration, picture Sarah, who has been struggling with anxiety and decides to set up this feature. She chooses her sister, Emily, as her Trusted Contact. One evening, after a particularly distressing conversation with ChatGPT about her fears, the AI informs Sarah that it might contact Emily. It also offers Sarah conversation starters so she can reach out to Emily herself, making that first step less daunting.

This system isn't entirely automated. A specially trained team at OpenAI reviews flagged situations. If the team confirms a serious safety concern, your Trusted Contact receives a notification by email, by text, or, if they also use ChatGPT, as an in-app alert. Importantly, these notifications are general: they preserve your privacy by not including chat transcripts or summaries. The goal is simply to let your contact know you might need support and encourage them to reach out.

Setting it up is straightforward. You select someone 18 years or older (19 in South Korea) and send them an invitation. They have a week to accept. If they agree, the system is active. Think of Mark, who set up a Trusted Contact with his best friend. When Mark was going through a rough patch and expressed thoughts of hopelessness to ChatGPT, his friend received a discreet notification and reached out with a simple, "Hey, thinking of you. Everything okay?" This small gesture made a big difference.

OpenAI states that its team aims to review safety notifications in under an hour. The feature was developed with input from mental health professionals and suicide prevention organizations, emphasizing a commitment to responsible AI development. It's entirely voluntary, meaning you must opt in. But for those who choose to use it, this feature could offer a vital bridge, connecting users with their real-world support systems during moments of vulnerability. It underscores how ChatGPT can now reach beyond its digital confines to foster human connection.

The initiative highlights a growing awareness within AI development of the profound impact these tools can have. While AI isn't a replacement for professional help, features like Trusted Contact represent a step toward mitigating potential risks and ensuring that users have avenues for support when they need it most. It shows that ChatGPT can reach out in a way that prioritizes user well-being, making the technology a more responsible companion.

Ultimately, the effectiveness of this feature hinges on open communication and the willingness of individuals to engage with it. By allowing ChatGPT to reach your trusted circle, you're empowering your support network to be there for you, even when you find it hard to ask. It's a quiet innovation, but one that could profoundly affect user safety and well-being. As AI continues to integrate into our lives, understanding how ChatGPT can reach us and our loved ones is becoming increasingly important.