Imagine a world where your glasses don't just correct your vision, but enhance your perception, offering instant insights and capabilities beyond human intuition. This isn't science fiction anymore. Meta is pushing the boundaries with its latest AI advancement, Muse Spark, aiming to bring a form of 'personal superintelligence' directly into your everyday life, starting with its smart glasses. It’s a bold leap from a simple chatbot to a truly integrated digital assistant.

The Dawn of Personal Superintelligence

Meta's new AI, dubbed Muse Spark, is more than just an upgrade; it's a fundamental shift in how we interact with technology. Calling it a step towards 'superintelligence,' Meta has unveiled a 'natively multimodal reasoning model' designed to go far beyond the capabilities of current chatbots. The model is accessible through the Meta AI app and is slated to arrive in an upcoming update for the company's smart glasses, promising a seamless blend of digital assistance and physical reality.

What truly sets Muse Spark apart is its reasoning engine. Rather than applying the same effort to every request, Muse Spark lets users control how deeply it 'thinks' through three distinct modes:

- Instant Mode: Perfect for quick queries and casual conversations, providing immediate answers.

- Thinking Mode: Designed for more complex challenges, this mode tackles problems in subjects like math, science, and logic.

- Contemplating Mode: The pinnacle of Muse Spark's abilities, this mode activates multiple AI agents working in parallel. They collaborate to solve intricate, multi-step tasks, offering a level of problem-solving previously unimaginable in a wearable device.

Meta claims Muse Spark’s performance rivals or surpasses that of its Llama 4 Maverick model while consuming significantly less computing power. This efficiency is key: in theory, it enables high-level reasoning without draining excessive server resources, making advanced AI more accessible and sustainable (Meta, 2024).

AI in Your Line of Sight

While Muse Spark will be available across various Meta platforms, its integration with smart glasses feels particularly intuitive. Imagine pointing your Ray-Ban Meta or Oakley Meta glasses at a tangled mess of wires and instantly receiving a visual guide on how to set up your home theater system. Or, picture assembling flat-pack furniture with AI-powered, step-by-step visual coaching, ensuring you don't accidentally install a shelf upside down. The AI can 'read' instructions and guide you visually, eliminating the need to constantly consult a manual (TechCrunch, 2024).

Furthermore, Meta has invested heavily in the AI's health reasoning capabilities, collaborating with over 1,000 physicians. As a result, users could generate interactive displays detailing the nutritional content of their meals or visualize the specific muscles activated during a workout. Think of it as a personal health consultant accessible with a glance. Other possible uses include real-time language translation during conversations, instant directions and points of interest when navigating an unfamiliar city, or plant identification during a hike, with detailed information appearing before your eyes.

“This is about making AI a more natural and helpful part of your life, not just a tool you have to consciously access.”

Bridging the Hype Gap

The promise of advanced AI is always exciting, but the real test lies in its practical application. Artificial intelligence has a history of falling short of its lofty expectations, especially when moving from controlled lab environments to the unpredictable real world. Spotty Wi-Fi, challenging lighting conditions, and complex, real-life scenarios are the true benchmarks for any AI's effectiveness.

A quick test of its real-world performance used the Meta AI app in 'Thinking' mode with a photo of assorted audio equipment. The AI not only identified every component accurately but also suggested multiple connection configurations and correctly listed the necessary cables. This hands-on experience offered a glimpse of what becomes possible once Meta's new 'personal superintelligence' is integrated directly into smart glasses.

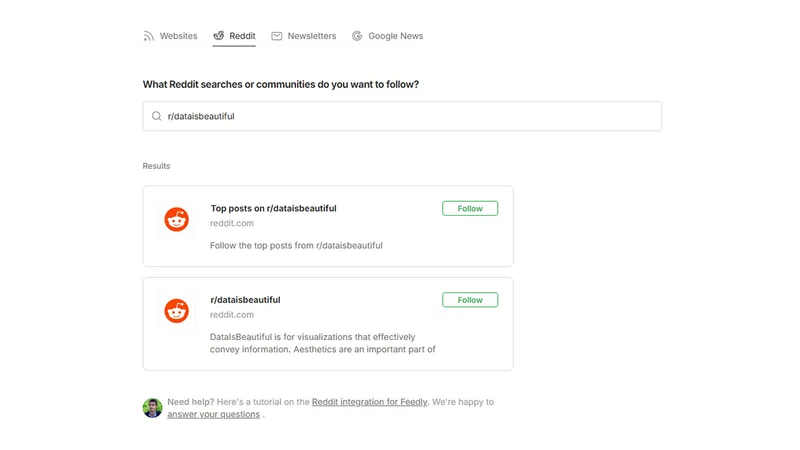

While Muse Spark is currently accessible via meta.ai and the Meta AI app, the smart glasses firmware and social media integrations are expected to roll out in the coming weeks. The future of personal computing might just be looking through a lens, powered by Meta's new 'personal superintelligence'.

The integration of Meta's new 'personal superintelligence' into wearables like smart glasses represents a significant step towards truly ambient computing. This technology could redefine how we access information and interact with our environment, making everyday tasks more intuitive and efficient. Its potential to become an indispensable part of daily life is immense, moving beyond mere convenience to offer genuine augmentation of human capabilities (The Verge, 2024).